NAO Robot Teacher Gender Study

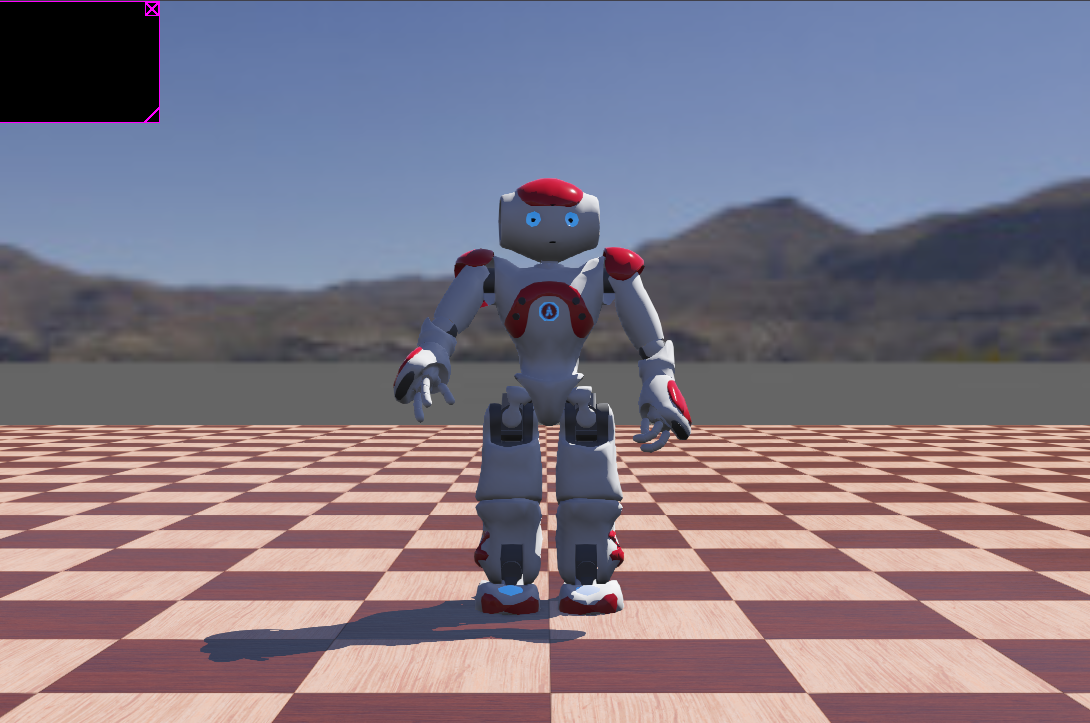

Webots simulation investigating whether human gender bias extends to humanoid robots in educational settings.

Overview

This project asks a specific question at the intersection of social robotics and AI ethics: do students project human gender biases onto robotic instructors? Humanoid robots like NAO are increasingly deployed in educational and service contexts, so understanding how their presentation, including assigned gender, affects human perception is important for designing fair and effective systems.

Research Question

When a humanoid robot is presented with gendered cues (voice pitch, name, pronouns), do human observers rate its competence, warmth, and authority differently? And does this pattern mirror documented gender bias in human teachers — where, for example, female instructors are rated higher on warmth but lower on authority?

Approach

Simulation Platform

The study uses the Webots robotics simulation platform with a NAO humanoid robot model. NAO was chosen because it is widely used in HRI (human-robot interaction) research and has a well-characterized behavioral model, making it appropriate for controlled experiments where the robot's presentation can be systematically varied.

Gendered Presentation Conditions

Two experimental conditions were implemented:

- Condition A (Female-presenting): NAO uses a higher-pitch synthesized voice, a female name, and female pronouns in its scripted teaching interactions.

- Condition B (Male-presenting): NAO uses a lower-pitch synthesized voice, a male name, and male pronouns.

All other variables — lesson content, gesture repertoire, speaking pace, error rate — are held constant across conditions. The robot delivers the same structured lesson in both cases.
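The design above can be made explicit in code by separating the manipulated cues from the controlled variables. The following is a minimal sketch, not the project's actual configuration: the `Condition` class, its field names, the placeholder names, and the pitch values are all assumptions chosen for illustration.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Condition:
    """One presentation condition. All values are illustrative placeholders."""
    label: str
    # Manipulated (gendered) cues
    robot_name: str
    pronoun: str
    voice_pitch: float  # hypothetical relative pitch multiplier
    # Controlled variables, intended to be identical across conditions
    lesson_id: str = "lesson_01"
    speaking_rate: float = 1.0
    gesture_set: str = "default"
    scripted_error_rate: float = 0.0


# Fields that are allowed to differ between conditions.
MANIPULATED = {"label", "robot_name", "pronoun", "voice_pitch"}

# Names and pitch values are placeholders, not the ones used in the study.
CONDITION_A = Condition("A", robot_name="<female name>", pronoun="she", voice_pitch=1.2)
CONDITION_B = Condition("B", robot_name="<male name>", pronoun="he", voice_pitch=0.8)


def differing_fields(a: Condition, b: Condition) -> set:
    """Return the names of all fields whose values differ between a and b."""
    return {f.name for f in fields(a) if getattr(a, f.name) != getattr(b, f.name)}


def check_only_manipulated_differ(a: Condition, b: Condition) -> None:
    """Raise ValueError if any controlled variable differs between conditions."""
    unexpected = differing_fields(a, b) - MANIPULATED
    if unexpected:
        raise ValueError(f"Controlled variables differ: {sorted(unexpected)}")


check_only_manipulated_differ(CONDITION_A, CONDITION_B)
```

Running a check like this before each session is one way to catch an accidental confound, such as a speaking rate edited in only one condition.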

Behavioral Scripting

NAO's teaching behavior is implemented in Python using the Webots controller API. The script coordinates synchronized speech, gesture, and gaze — NAO turns toward the virtual student, gestures toward a simulated whiteboard, and delivers lesson content with appropriate pauses. The behavior tree was designed to feel naturalistic without introducing confounding differences between conditions.
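One way to keep speech, gesture, and gaze synchronized and identical across conditions is to drive them from a single timed script. The sketch below is an assumption about how that could look, not the project's controller: the `Step` class, the action names, the timings, and the 32 ms timestep are hypothetical, and the simulated clock stands in for the Webots step loop so the example runs without Webots installed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    start_ms: int  # when the action begins, in ms from lesson start
    channel: str   # "speech", "gesture", or "gaze"
    action: str    # text to speak, or the name of a motion


def build_lesson(robot_name: str) -> list[Step]:
    """Return the scripted lesson; only the spoken name depends on the condition."""
    return [
        Step(0, "gaze", "look_at_student"),
        Step(500, "speech", f"Hello, my name is {robot_name}."),
        Step(3000, "gesture", "point_at_whiteboard"),
        Step(3000, "speech", "<lesson content>"),
        Step(8000, "gaze", "look_at_student"),
    ]


def run(steps: list[Step], timestep_ms: int = 32) -> list[tuple[int, Step]]:
    """Advance a simulated clock and return (tick_time_ms, step) in firing order.

    In a real Webots controller, the loop would instead be driven by
    `while robot.step(timestep) != -1`, and each fired step would be
    dispatched to the speaker or motor devices.
    """
    log = []
    end_ms = max(s.start_ms for s in steps)
    t = 0
    while t <= end_ms:
        for s in steps:
            # Fire each step in the tick whose interval [t, t + dt) contains it.
            if t <= s.start_ms < t + timestep_ms:
                log.append((t, s))
        t += timestep_ms
    return log


if __name__ == "__main__":
    for tick, step in run(build_lesson("<name>")):
        print(f"{tick:>5} ms  {step.channel:<8} {step.action}")
```

Because the gesture and the lesson speech share a start time, they fire on the same simulation tick, and because the timeline is built by one function, both conditions get the same timings by construction.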

Connection to Ethical AI

This project connects directly to broader questions in AI alignment and fairness. If people perceive robotic systems through the same gender biases they apply to humans, then deploying those systems in high-stakes contexts (education, healthcare, hiring support) risks perpetuating and amplifying those biases at scale. Understanding the mechanism, that is, whether bias is triggered by gendered voice, name, pronouns, or some combination of them, is a prerequisite for designing mitigation strategies.

The work complements my research in ethical reinforcement learning: both projects are fundamentally about how AI systems interact with human values and whether those interactions can be made fairer and more transparent.

Status

The simulation environment and behavioral scripts are complete. Study design and analysis are in progress as part of the ECE 787 course deliverable.

Assets